Built for environments too complex for black-box AI

Wild Moose deploys specialized AI micro-agents that investigate alerts, surface root causes, and suggest fixes in under a minute.

The only AI SRE built around an open, customizable agent environment

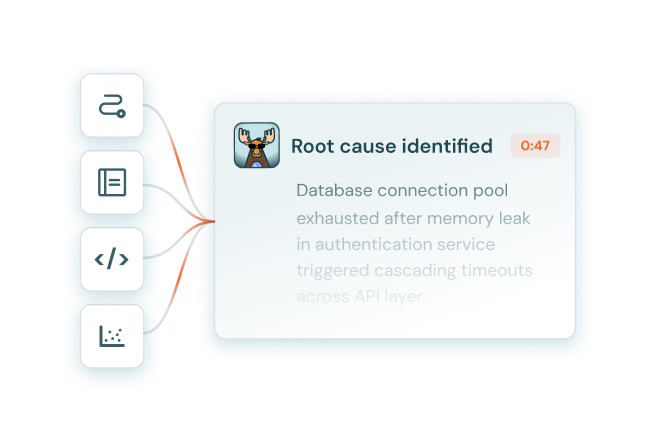

Triage agents activate

When an alert fires, Wild Moose activates triage agents to run checks, pull evidence, and narrow down the most likely root cause.

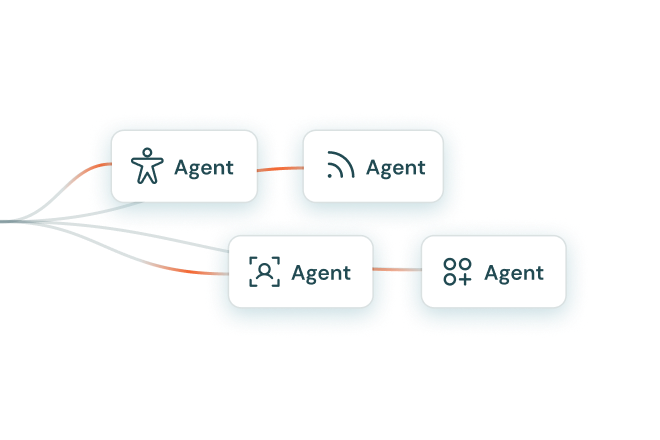

Specialized agents deploy

Wild Moose then triggers additional specialized agents based on the signals it finds, so the investigation stays focused and the root cause is found in under a minute.

Gaps become new agents

If the investigation surfaces a gap, it becomes a new or updated agent, committed as a pull request and validated against past incidents before it ever runs in production.

Accuracy improves continuously

The result is a system where accuracy and coverage improve continuously.

AI-driven investigations you can test, audit, and trust

Wild Moose is engineered to the same reliability standards as your observability systems: agents are treated as code and validated against real past incidents.

Investigate in under a minute

Every agent's findings are synthesized into a structured root cause analysis, including key signals, ranked hypotheses, and next steps.
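
As a rough illustration of what such a structured analysis could look like in code, here is a minimal Python sketch. The class names, field names, and the 0-to-1 confidence score are assumptions made for this example, not Wild Moose's actual output format.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    summary: str
    confidence: float  # hypothetical 0..1 score, assumed for illustration


@dataclass
class RootCauseAnalysis:
    alert_id: str
    key_signals: list[str] = field(default_factory=list)
    hypotheses: list[Hypothesis] = field(default_factory=list)
    next_steps: list[str] = field(default_factory=list)

    def ranked(self) -> list[Hypothesis]:
        """Return hypotheses ordered from most to least likely."""
        return sorted(self.hypotheses, key=lambda h: h.confidence, reverse=True)


# Made-up example data; none of these values come from a real investigation.
rca = RootCauseAnalysis(
    alert_id="alert-123",
    key_signals=["p99 latency spike on checkout", "deploy shortly before alert"],
    hypotheses=[
        Hypothesis("Database connection pool exhausted", 0.35),
        Hypothesis("Regression in latest checkout deploy", 0.55),
    ],
    next_steps=["Roll back the latest checkout deploy"],
)
top = rca.ranked()[0]
```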

Deploy specialized micro-agents

On alert, a system of cooperating AI debugging micro-agents launches a parallel investigation across your connected tools, fully tailored to your environment and incident patterns.
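
To make the idea of a parallel investigation concrete, the sketch below fans an alert out to several small checks with Python's `asyncio.gather`. The three agent functions and their findings are hypothetical stand-ins for queries against connected tools; this is one plausible shape for such a system, not a description of Wild Moose's internals.

```python
import asyncio


async def check_recent_deploys(alert: dict) -> dict:
    # Hypothetical stand-in for a query against a CI/CD tool.
    await asyncio.sleep(0.01)
    return {"agent": "recent_deploys", "finding": "deploy shortly before alert"}


async def check_error_logs(alert: dict) -> dict:
    # Hypothetical stand-in for a log search scoped to the alerting service.
    await asyncio.sleep(0.01)
    return {"agent": "error_logs", "finding": f"timeouts in {alert['service']}"}


async def check_dependency_health(alert: dict) -> dict:
    # Hypothetical stand-in for a health check on upstream dependencies.
    await asyncio.sleep(0.01)
    return {"agent": "dependency_health", "finding": "all dependencies healthy"}


async def investigate(alert: dict) -> list:
    agents = [check_recent_deploys, check_error_logs, check_dependency_health]
    # gather() runs the checks concurrently and returns results in input order.
    return await asyncio.gather(*(agent(alert) for agent in agents))


findings = asyncio.run(investigate({"id": "alert-123", "service": "checkout"}))
```

Because each check is an independent coroutine, adding a new one only means appending it to the list, which keeps each agent small and individually testable.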

Build and manage agents as code

Micro-agents are built and managed as code and tested against real past incidents, so your investigation logic is auditable, improvable, and never a black box.

Test on past incidents

For the first time, make your incident response policies visible and testable. Teams validate investigation logic before it reaches production, so when incidents occur, agents behave as expected.
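
Testing investigation logic on past incidents can be pictured as an ordinary backtest: replay recorded signals through an agent and compare its conclusion with the known root cause. The sketch below assumes a toy rule-based agent and a made-up incident record format; both are illustrative only.

```python
def deploy_regression_agent(signals: dict) -> str:
    """Toy rule: blame a deploy if one landed shortly before the alert."""
    minutes = signals.get("minutes_since_deploy")
    if minutes is not None and minutes <= 15:
        return "recent_deploy"
    if signals.get("db_connections_saturated"):
        return "database_saturation"
    return "unknown"


# Hypothetical historical incidents with their confirmed root causes.
PAST_INCIDENTS = [
    {"signals": {"minutes_since_deploy": 5}, "root_cause": "recent_deploy"},
    {
        "signals": {"minutes_since_deploy": 240, "db_connections_saturated": True},
        "root_cause": "database_saturation",
    },
    {
        "signals": {"minutes_since_deploy": 10, "db_connections_saturated": True},
        "root_cause": "database_saturation",
    },
]


def backtest(agent, incidents):
    """Return the agent's accuracy and the incidents it got wrong."""
    misses = [i for i in incidents if agent(i["signals"]) != i["root_cause"]]
    accuracy = 1 - len(misses) / len(incidents)
    return accuracy, misses


accuracy, misses = backtest(deploy_regression_agent, PAST_INCIDENTS)
```

In this made-up data set the third incident is a miss: a deploy happened close to the alert, but the confirmed cause was database saturation. That is the kind of gap a backtest is meant to expose before the logic runs on a live alert.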

Surface recurring issues before they escalate

The system's efficient micro-agent architecture is lightweight enough to run on every alert and to backtest freely, shifting incident response from reactive to preventative.

Trace every conclusion back to the source

Every investigation step is visible: data sources queried, findings, AI reasoning, and recommendations. Agents are defined as inspectable YAML code, not opaque models.

A test-driven approach to systematizing production debugging

Wild Moose gets up to speed in hours, not weeks, and continues to improve with every alert.

Connect your observability tools, code repos, and CI/CD pipelines, and Wild Moose ingests your historical incident data to understand your environment, failure patterns, and stack before it sees its first live alert.

Easy to implement, easy to maintain

Agents are built and managed as code, so AI coding assistants can speed up agent creation over time. Describe the check you need in natural language, directly from Slack, and it is auto-translated to code. Then test it against historical incidents and iterate until it performs reliably in production.